Mode-Guided Dataset Distillation using Diffusion Models

ICML 2025 Oral (Top 1.0%): a dataset distillation approach that improves sample diversity and downstream performance without fine-tuning the diffusion model.

Authors: Jeffrey A. Chan Santiago¹, Praveen Tirupattur¹, Gaurav Kumar Nayak², Gaowen Liu³, Mubarak Shah¹

¹Center for Research in Computer Vision, University of Central Florida; ²Mehta Family School of DS & AI, Indian Institute of Technology Roorkee, India; ³Cisco Research

Abstract

Dataset distillation, which compresses a large training set into a small synthetic one, has emerged as an effective strategy for significantly reducing training costs and enabling more efficient model deployment. Recent advances leverage generative models to distill datasets by capturing the underlying data distribution.

However, existing generative methods require fine-tuning the model with distillation losses to encourage diversity and representativeness, and even then they do not guarantee sample diversity, which limits their performance.

We propose a mode-guided approach that uses a pre-trained diffusion model without fine-tuning it on distillation losses. Our approach addresses dataset diversity in three stages: Mode Discovery to identify distinct data modes, Mode Guidance to enhance intra-class diversity, and Stop Guidance to mitigate artifacts in synthetic samples that would otherwise hurt performance.

We evaluate our approach on ImageNette, ImageIDC, ImageNet-100, and ImageNet-1K, achieving accuracy improvements of 4.4%, 2.9%, 1.6%, and 1.6%, respectively, over state-of-the-art methods. Our method eliminates the need for fine-tuning diffusion models with distillation losses, significantly reducing computational costs.

The Task

- Optimization-based Distillation: Learns a synthetic dataset by directly optimizing it to match the gradient or feature statistics of the original dataset.

- Generation-based Distillation: First models the distribution of the original dataset, then generates samples that approximate this learned distribution.
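To make the optimization-based flavor concrete, here is a deliberately tiny sketch: a synthetic set is optimized by gradient descent so that a single feature statistic (the mean) matches the real data. Practical methods match network gradients or much richer statistics; the function `distill_by_mean_matching` and its parameters are hypothetical, and this is not the MGD³ method, which is generation-based.

```python
import numpy as np


def distill_by_mean_matching(real, n_synthetic, steps=500, lr=0.5, seed=0):
    """Toy optimization-based distillation (hypothetical helper).

    Learns `n_synthetic` rows whose mean matches the mean of `real`
    by gradient descent on L(S) = ||mean(S) - mean(real)||^2."""
    rng = np.random.default_rng(seed)
    synthetic = rng.standard_normal((n_synthetic, real.shape[1]))  # random initialization
    target = real.mean(axis=0)                                     # statistic to match
    for _ in range(steps):
        residual = synthetic.mean(axis=0) - target
        # dL/dS_i = 2 * residual / n_synthetic, identical for every synthetic row
        grad = 2.0 * residual / n_synthetic
        synthetic -= lr * grad  # broadcasts the same update over all rows
    return synthetic


# Example: distill 1000 real 4-D points into 10 synthetic points
real = np.random.default_rng(42).normal(loc=3.0, size=(1000, 4))
synthetic = distill_by_mean_matching(real, n_synthetic=10)
print("max mean mismatch:", np.abs(synthetic.mean(axis=0) - real.mean(axis=0)).max())
```

With `lr=0.5` the residual shrinks by a factor of (1 - 1/n_synthetic) per step. Because the loss only constrains the mean, any synthetic set with the right mean is optimal, which is why real methods match richer signals such as per-layer gradients or feature distributions.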

Motivation

The original data distribution is shown as blue dots, with denser regions highlighted by an orange gradient field. To generate a sample, noise is first drawn from a standard normal distribution.

- (a) DiT: A pre-trained diffusion model without fine-tuning leads to imbalanced mode likelihoods, resulting in limited sample diversity and frequent repetition of modes.

- (b) MinMax Diffusion: Fine-tunes the model to balance mode likelihoods and improve diversity. However, it still suffers from sample redundancies tied to initial noise conditions.

- (c) MGD³ (Ours): Introduces mode-guided denoising (colored traces), explicitly steering samples toward distinct modes (stars). After t_stop guided steps, it switches to unguided denoising (black trace), achieving both high diversity and consistency without requiring any fine-tuning.
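The diversity argument in panels (a) and (c) can be illustrated with a toy simulation that abstracts each generated sample to the index of the mode it lands in. The mode probabilities below are made up for illustration, the helper names are hypothetical, and no diffusion model is involved.

```python
import numpy as np


def unguided_modes(mode_probs, n_samples, rng):
    """Each sample lands in a mode drawn from the model's (imbalanced) mode likelihoods."""
    return rng.choice(len(mode_probs), size=n_samples, p=mode_probs)


def mode_guided_modes(n_modes, n_samples):
    """Mode guidance gives each sample its own target mode, cycling if n_samples > n_modes."""
    return np.arange(n_samples) % n_modes


def n_distinct(modes):
    return len(np.unique(modes))


# Hypothetical mode likelihoods for one class: one dominant mode, three rare ones
probs = np.array([0.70, 0.15, 0.10, 0.05])
rng = np.random.default_rng(0)
trials = [n_distinct(unguided_modes(probs, 4, rng)) for _ in range(1000)]
print("unguided, mean distinct modes in 4 samples:", np.mean(trials))
print("mode-guided, distinct modes in 4 samples:", n_distinct(mode_guided_modes(4, 4)))
```

With these probabilities, four unguided samples cover only about two distinct modes on average (the expectation, the sum over modes of 1 - (1 - p)^4, is about 2.0), whereas assigning one target mode per sample covers all four.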

Our Method

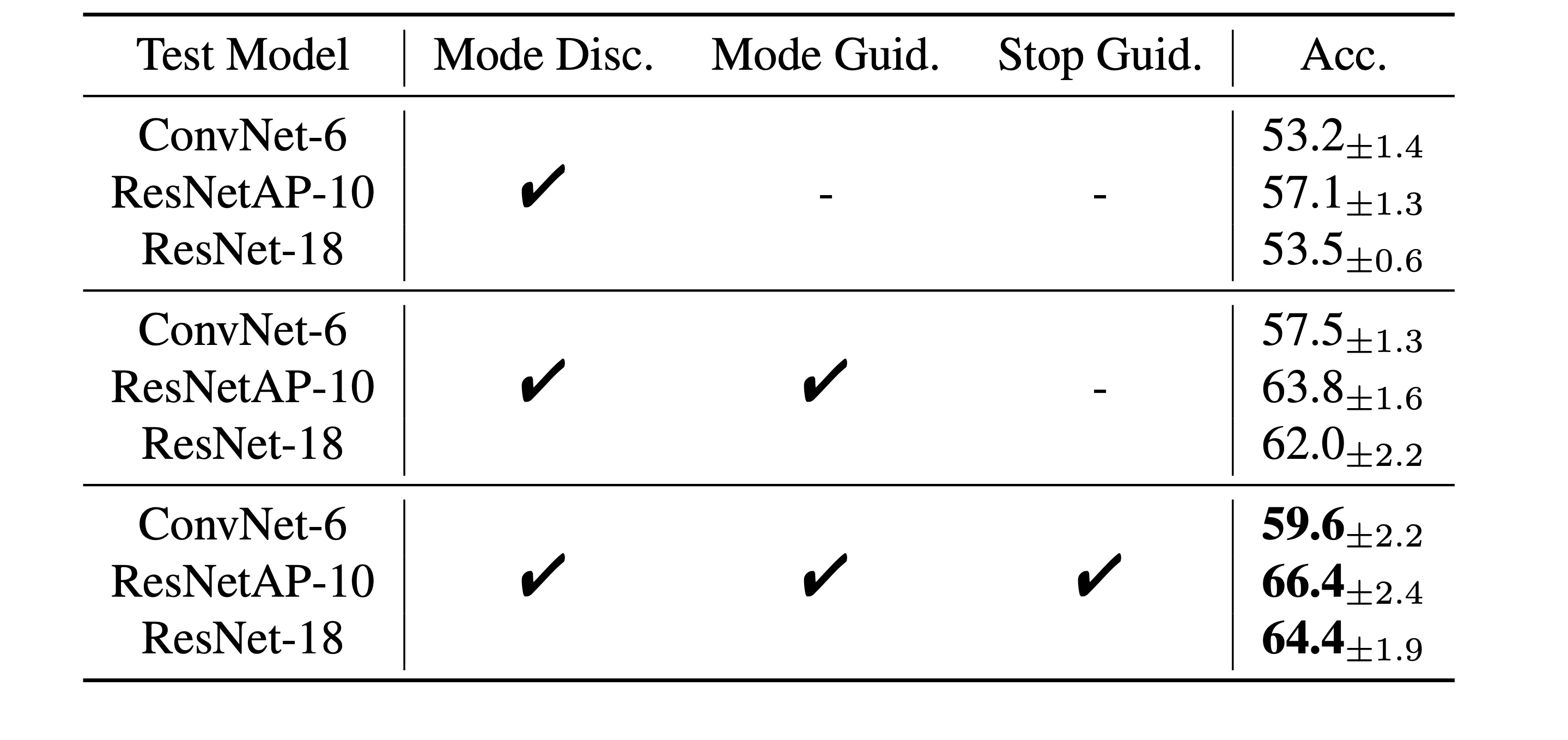

- Mode Discovery: Estimates the N modes of the original dataset in the latent diffusion model’s generative space.

- Mode Guidance: Given a mode m_k and class c, the generation process is steered toward the mode m_k for t_stop denoising steps using the pre-trained model.

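- Stop Guidance: After t_stop steps, the model switches to standard unguided denoising, which mitigates artifacts that continued guidance would introduce into the synthetic samples. Without any mode guidance, generations would simply follow the unguided path, producing redundant or overlapping samples.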
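The page does not say how the modes are estimated. A minimal sketch, assuming modes are taken to be k-means centroids of one class's latents (for example, flattened VAE encodings of its training images), could look like the following. `discover_modes` and its arguments are hypothetical, and the deterministic farthest-point initialization is a choice made here so that well-separated clusters are not merged.

```python
import numpy as np


def discover_modes(latents, n_modes, n_iters=50, seed=0):
    """Estimate `n_modes` modes of `latents` (num_samples x dim) with k-means (hypothetical).

    Returns an array of centroids with shape (n_modes, dim)."""
    rng = np.random.default_rng(seed)
    # Farthest-point initialization: start from a random latent, then repeatedly
    # add the latent farthest from all centroids chosen so far.
    centroids = [latents[rng.integers(len(latents))]]
    for _ in range(1, n_modes):
        d2 = np.min([((latents - c) ** 2).sum(axis=1) for c in centroids], axis=0)
        centroids.append(latents[d2.argmax()])
    centroids = np.array(centroids, dtype=float)

    for _ in range(n_iters):
        # Assignment step: squared distance from every latent to every centroid
        d2 = ((latents[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=-1)
        labels = d2.argmin(axis=1)
        # Update step: move each centroid to the mean of its assigned latents
        for k in range(n_modes):
            members = latents[labels == k]
            if len(members) > 0:  # keep the previous centroid if a cluster empties
                centroids[k] = members.mean(axis=0)
    return centroids


# Example: three well-separated toy clusters standing in for one class's latents
rng = np.random.default_rng(1)
centers = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]])
latents = np.concatenate([c + 0.5 * rng.standard_normal((100, 2)) for c in centers])
print(discover_modes(latents, n_modes=3).round(2))
```

In a real pipeline `latents` would come from the latent diffusion model's autoencoder; one natural choice (an assumption here) is to set `n_modes` to the images-per-class budget so that each synthetic image can target its own mode.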

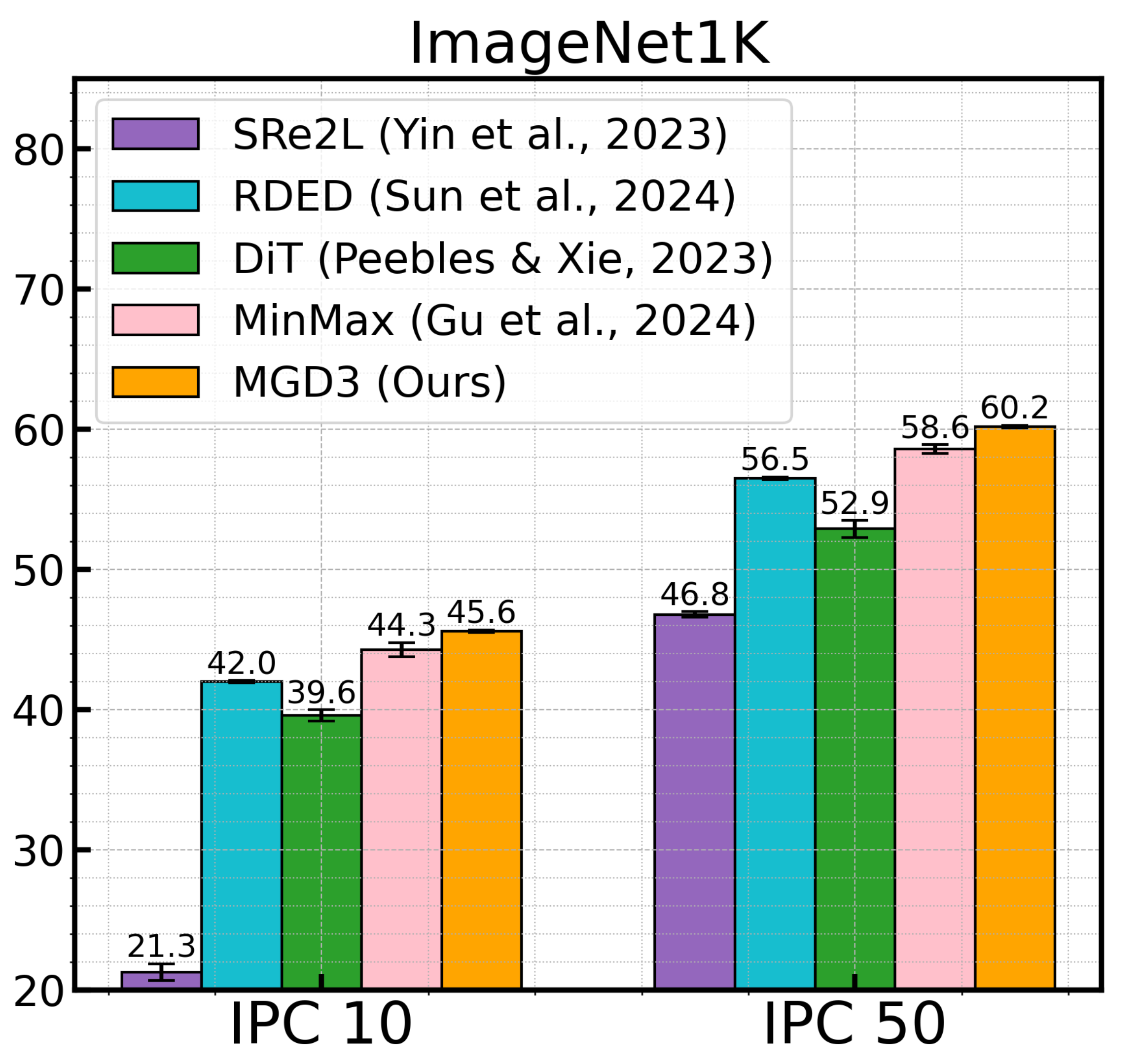
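Mode Guidance and Stop Guidance can be sketched end to end on a toy problem in which the "pre-trained model" is the exact denoiser of a 2-D Gaussian mixture with imbalanced weights. Everything here is an assumption for illustration: the mixture, the deterministic variance-exploding DDIM sampler, and the guidance rule, which simply moves the predicted clean sample a fraction `guidance_scale` of the way toward the target mode during the first `t_stop` steps (one gradient step on half the squared distance). It is not the paper's exact formulation.

```python
import numpy as np

# Hypothetical toy data: a 2-D Gaussian mixture with imbalanced mode likelihoods
MEANS = np.array([[-4.0, 0.0], [4.0, 0.0], [0.0, 4.0]])
WEIGHTS = np.array([0.80, 0.15, 0.05])
DATA_STD = 0.3


def predict_x0(x, sigma):
    """Exact E[x0 | x_t = x] for the toy mixture (Tweedie: x + sigma^2 * score)."""
    var = DATA_STD**2 + sigma**2  # per-component variance after adding noise
    sq_dist = ((x[None, :] - MEANS) ** 2).sum(axis=1)
    logits = np.log(WEIGHTS) - sq_dist / (2 * var)
    resp = np.exp(logits - logits.max())
    resp /= resp.sum()  # component responsibilities
    score = (resp[:, None] * (MEANS - x[None, :])).sum(axis=0) / var
    return x + sigma**2 * score


def sample(x_T, sigmas, mode=None, t_stop=0, guidance_scale=0.8):
    """Deterministic variance-exploding DDIM sampler.

    For the first `t_stop` steps the x0 prediction is pulled toward `mode`
    (simplified mode guidance); afterwards sampling is unguided (stop guidance)."""
    x = np.array(x_T, dtype=float)
    for i in range(len(sigmas) - 1):
        sigma, sigma_next = sigmas[i], sigmas[i + 1]
        x0_hat = predict_x0(x, sigma)
        if mode is not None and i < t_stop:
            # Gradient step on 0.5 * ||x0_hat - mode||^2 with step size guidance_scale
            x0_hat = x0_hat - guidance_scale * (x0_hat - mode)
        eps_hat = (x - x0_hat) / sigma  # implied noise direction
        x = x0_hat + sigma_next * eps_hat
    return x


def nearest_mode(x):
    return int(((MEANS - x) ** 2).sum(axis=1).argmin())


# 50 denoising steps from sigma = 20 down to 0.01, then a final step to sigma = 0
SIGMAS = np.concatenate([np.geomspace(20.0, 0.01, 50), [0.0]])

rng = np.random.default_rng(0)
noises = 20.0 * rng.standard_normal((200, 2))  # x_T ~ N(0, sigma_max^2 I)
unguided = [nearest_mode(sample(z, SIGMAS)) for z in noises]
guided = [nearest_mode(sample(z, SIGMAS, mode=MEANS[k % 3], t_stop=30))
          for k, z in enumerate(noises)]
print("unguided mode counts:", np.bincount(unguided, minlength=3))
print("guided mode counts:  ", np.bincount(guided, minlength=3))
```

Unguided runs mostly land in the dominant mode, mirroring panel (a), while guided runs reach their assigned mode even when it is rare. Because guidance is switched off for the last 20 steps, the final refinement comes from the unmodified denoiser.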

Results

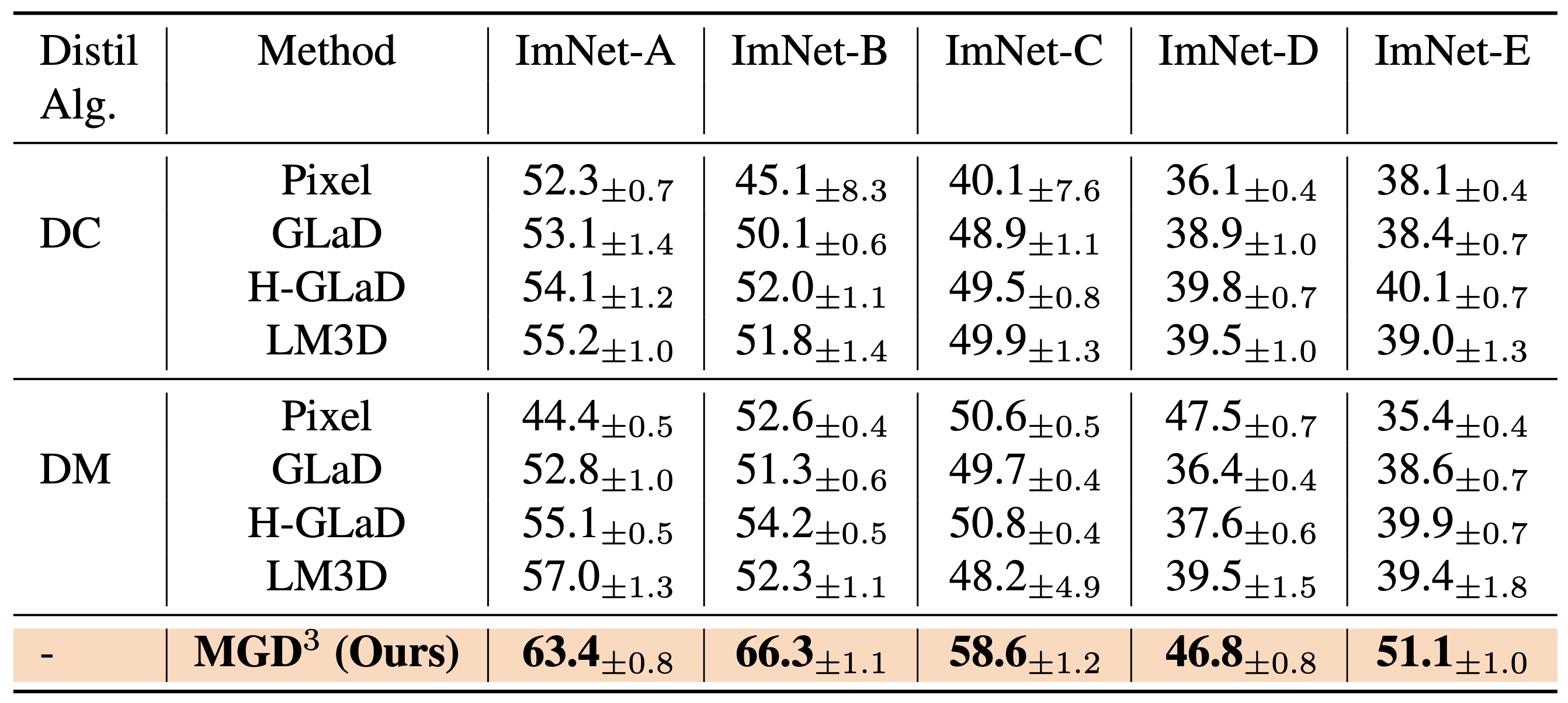

ImageNet Subsets

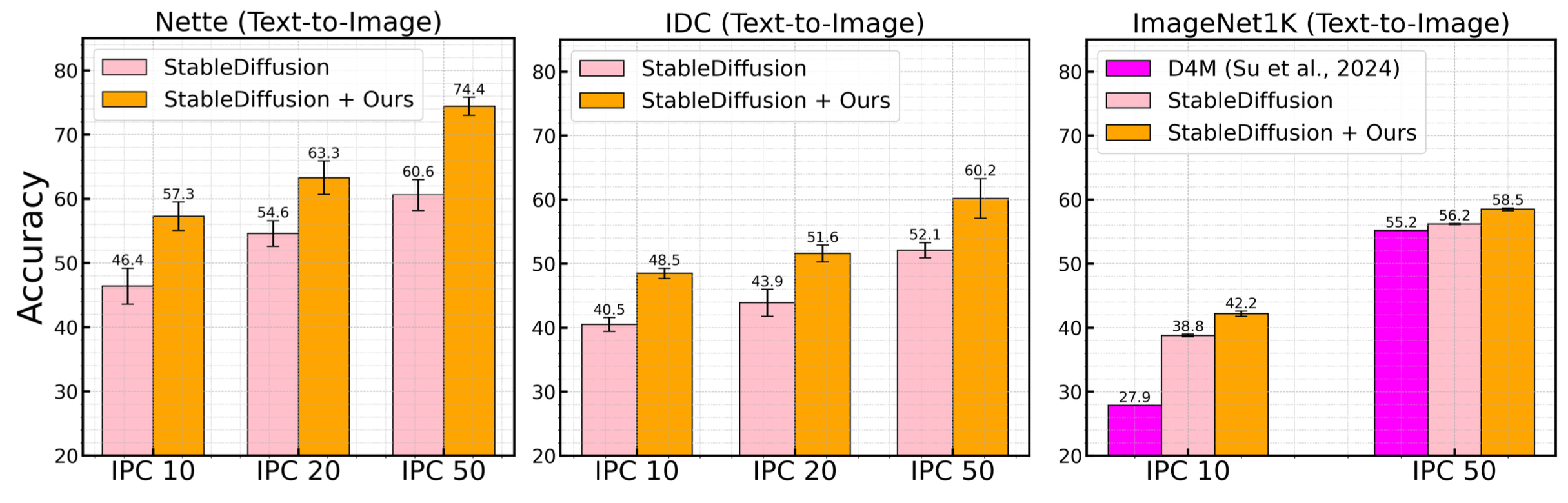

Text-to-Image Diffusion Models

Ablations

BibTeX

@inproceedings{chan2025mgd3,
  title = {{MGD}$^3$: Mode-Guided Dataset Distillation using Diffusion Models},
  author = {Chan Santiago, Jeffrey A. and Tirupattur, Praveen and Nayak, Gaurav Kumar and Liu, Gaowen and Shah, Mubarak},
  booktitle = {Proceedings of the 42nd International Conference on Machine Learning (ICML)},
  year = {2025},
}